Picture this: you finish a teletherapy session, rich with layered family dynamics, unspoken tensions, and a breakthrough moment in relational co-construction, and open the electronic health record to chart. But the platform has already been at work. An AI-generated summary sits there, neatly formatted, highlighting symptoms, diagnoses, and suggested next steps. It asks for your review, edits, approval; it even asks you to rate how well it did.

No one was told

Neither you nor your patient was explicitly told this would happen. There is no extra time allocated or compensation for this new layer of oversight. At some clinics, a posted sign mentions an “AI scribe,” but it rarely details what the technology does, how data flows, or whether patients can opt out.

This is already happening

This is not a hypothetical future scenario; it is happening now in many large provider networks and electronic health record systems. As marriage and family therapists, we are trained to see systems, relationships, and people as people. Yet here is an additional participant in the chart: a computerized interpretation built on medical models that differ from our systemic models. The AI is trained primarily on intrapsychic, medicalized, diagnostic approaches. Even at that, these models do not score against standardized testing with clinical scores, thresholds, and cut-offs. There is no setting to select a specific therapy model, let alone a systemic or relational model. Instead, the options focus mainly on different note formats that produce cognitive–behavioral or medical notes.

Convenience is real

What might this mean for our work? On one hand, the convenience is real. For providers managing high-volume caseloads and administrative demands, the tools free cognitive bandwidth for attunement and improvisation in-session. Yet the summaries flatten nuance. Subtle relational bids, nonverbal cues, and systemic context may be reframed in individual-focused diagnostic terms, raising curiosity about what is gained—and what is lost.

These tools highlight convenience. Yet they raise questions. How well do their templates capture interactional patterns or systemic dynamics? Do multi-speaker features truly preserve family dynamics, or do they still lean toward individual-focused summaries?

Large-scale rollouts and what they reveal

Large healthcare systems provide useful insights into how ambient AI documentation tools are performing in real clinical environments. For example, Kaiser Permanente’s implementation of an ambient AI scribe powered by Abridge was evaluated across 7,260 physicians and over 2.5 million patient encounters between October 2023 and December 2024. In that system, providers saved nearly 16,000 hours of documentation time, with adoption highest in mental health, emergency medicine, and primary care. Surveys from this implementation found that 88% of those providers reported improved visit interactions, patients noticed providers spent less time looking at screens, and two-thirds felt comfortable with the technology in use (AMA, 2025; Tierney et al., 2025).

Controlled clinical research supports the idea that AI scribes can reduce administrative burden while also highlighting areas that require active oversight. In a randomized trial, 238 outpatient physicians across 14 specialties were assigned to use one of two ambient AI scribe tools or continue their usual documentation practices. Across about 72,000 patient encounters, providers using one of the AI tools experienced a roughly 9.5% reduction in note writing time compared with usual care. The second tool in the trial showed smaller, non-significant changes in documentation time. Both tools were associated with modest improvements in validated measures of burnout, cognitive workload, and work exhaustion relative to control. Providers reported that AI-generated drafts occasionally contained clinically relevant inaccuracies, particularly omissions or pronoun errors, and one mild patient safety event occurred, underlining the importance of provider review and clinical responsibility (Lukac et al., 2025).

These findings suggest real benefits for high-volume practices. Yet they prompt curiosity. How might similar voluntary, privacy-focused models, where patients are informed and outputs are clinician-reviewed, translate to independent teletherapy practices?

What gets lost

Yet convenience invites trade-offs worth examining. AI tends to flatten nuance: subtle relational bids, non-verbal cues visible only on video, cultural idioms, or shifts in power dynamics within a family system may be overlooked or reframed in diagnostic terms. A session focused on an acute situational stressor, perhaps a job loss affecting the entire household, might be summarized with emphasis on individual anxiety or depression, while the broader systemic context fades. How faithfully can a tool trained on medical models capture the co-constructed meaning that defines much of our practice? And what happens when those summaries become part of the record that insurers, supervisors, or quality reviewers see first?

These questions grow more pressing when we consider how AI outputs intersect with administrative and reimbursement processes. Summaries that pre-highlight certain diagnoses or structure notes in standardized formats might influence coverage decisions, utilization reviews, or treatment authorizations. If relational context is minimized, might that affect how progress is measured or justified? It is not that these outcomes are guaranteed, but they are plausible enough to warrant reflection.

Errors, omissions, and hallucinations

AI-generated outputs can include errors, omissions, or hallucinations. Hallucinations refer to fabricated content that appears plausible but has no basis in the source material. Overall error rates in some AI-generated notes range from 1% to 3% (Topaz et al., 2025). Even low error rates pose risks in healthcare, where small inaccuracies can affect patient safety. Other issues include critical omissions of symptoms or concerns, misinterpretations of context, and speaker attribution errors in multi-speaker settings. Baseline automatic speech recognition systems often begin around 7% word error rate, with higher rates for certain accents, dialects, or demographic groups such as African American speakers (Martin & Wright, 2023; Mengesha et al., 2021). AI-generated notes can also reflect biases in their training data, sometimes misrepresenting anatomy, demographics, or clinical details, which can introduce errors beyond simple transcription mistakes (Mess et al., 2025). These limitations are especially pronounced in mental health care, where nuance matters and nonverbal cues or social determinants of health may be missed (Topaz et al., 2025).
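To make the word error rate figure concrete, here is a minimal Python sketch of how that metric is conventionally computed: the number of word substitutions, deletions, and insertions needed to turn the transcript into the reference, divided by the number of words in the reference. The function name `word_error_rate` and the sample sentences are illustrative assumptions, not drawn from any vendor's system or from the studies cited above.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1       # reference word missing from transcript
            insertion = curr[j - 1] + 1  # extra word in transcript
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


# Invented sentences: one pronoun is transcribed incorrectly.
spoken = "she said her mother left when she was nine"
transcribed = "he said her mother left when she was nine"
print(f"{word_error_rate(spoken, transcribed):.3f}")  # prints 0.111
```

In this invented example, a single swapped pronoun out of nine words gives a word error rate of about 11%. The metric weights every word equally, so on its own it cannot distinguish a clinically consequential pronoun error from a harmless one.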

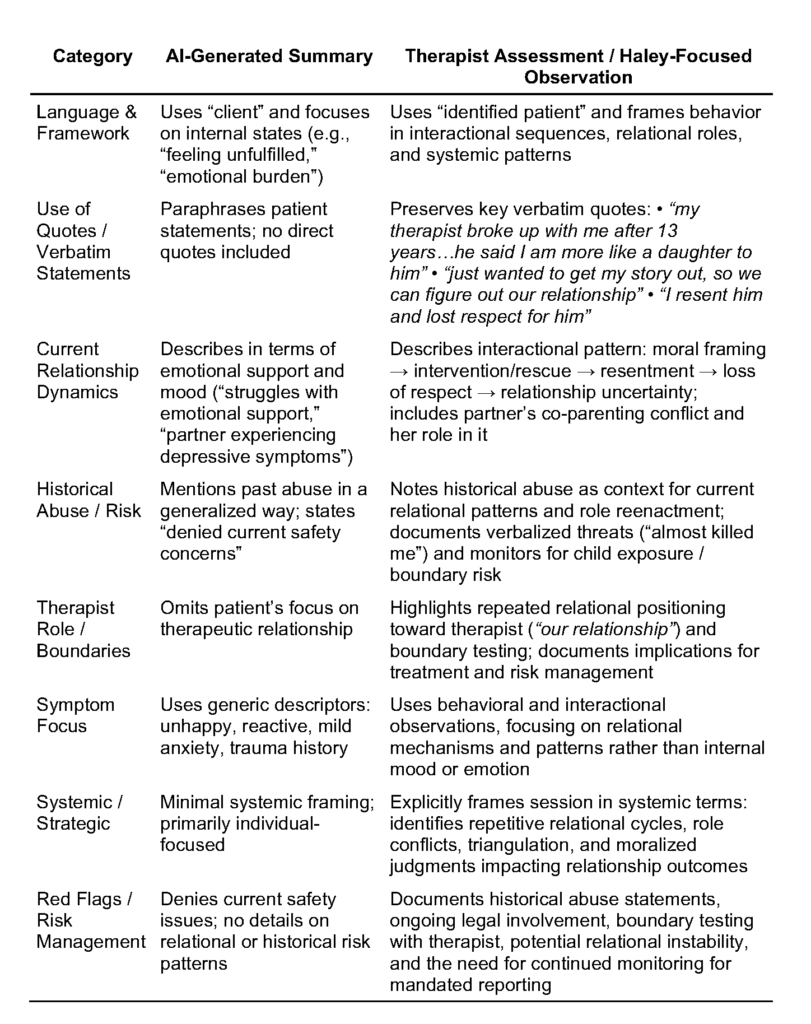

Comparing AI-generated summaries with clinician documentation: A case-based observation

In reviewing AI-generated documentation from an actual teletherapy session, I observed significant divergences between the automated summary and the notes I authored as the treating therapist. The patient’s verbalizations, relational positioning, and interactional patterns were often flattened or omitted in the AI summary. In contrast, my documentation preserved verbatim statements, contextualized historical trauma, and captured the cyclical dynamics in her relationships, consistent with strategic family therapy principles (Lockhart, 2025a, 2025b). The following analysis highlights these differences and their implications for clinical accuracy, risk management, and fidelity to a systemic therapeutic model.

Reviewing this case highlights how AI-generated summaries can obscure clinically meaningful information, particularly when evaluated against strategic family therapy. The AI summary reduces complex interactional sequences to internal states, generalized emotional descriptors, and paraphrased observations. In contrast, my documentation focuses on the relational system, preserving verbatim statements and mapping cyclical patterns of positioning, intervention, and relational escalation that structure the patient’s relationships.

Verbatim statements such as “my therapist broke up with me after 13 years…he said I am more like a daughter to him” and “just wanted to get my story out, so we can figure out our relationship” reveal recurring relational patterns with authority figures and partners. These quotes also document the patient’s orientation toward me in-session, highlighting dependency, boundary testing, and the co-construction of meaning. These dimensions are entirely absent from the AI summary. Preserving these observations aligns with strategic documentation principles, emphasizing interactional sequences and therapist–identified patient relational dynamics (Lockhart, 2025a; Lockhart, 2025b).

Separately, risk and boundary monitoring require explicit attention. While the AI summary notes that the patient “denied current safety concerns,” my assessment situates historical abuse statements, parenting responsibilities, and repeated boundary testing as relational red flags requiring ongoing attention (Lockhart, 2025c). Including these observations ensures clinical accountability, supports mandated reporting obligations, and documents how the patient enacts patterns in-session, including toward the therapist as an observing participant. Bateson (1972) explicitly argued that “the observer must be included in the field of observation,” meaning observation cannot be separated from the observer’s involvement in the system.

Together, these examples underscore that AI-generated summaries, while convenient, fail to capture therapist–patient relational data, co-constructed meaning, and the observer effect, all of which are critical for clinically defensible assessment and intervention planning. Maintaining verbatim records of these interactions preserves the relationally grounded, strategic perspective essential for effective treatment.

Clinical safety and AI errors

Recent research has examined how often AI-generated clinical notes make mistakes and how serious those mistakes can be. In a study of 450 primary care transcript-note pairs, hallucinations occurred in roughly 1.5% of cases, with nearly half considered major, while omissions appeared in about 3.5%, with a smaller proportion rated as major (Asgari et al., 2025). Risk was highest in sections such as the “Plan,” where AI sometimes invented next steps or reversed intended meanings. Adjustments to prompts, output review, and revision processes significantly reduced major errors, yet human oversight remained essential. These findings highlight that AI can shape clinical understanding in subtle ways, particularly in relational or systemic contexts, and underscore the importance of safeguards such as clinician review and vendor feedback loops (Asgari et al., 2025).

These studies underscore that AI is powerful but imperfect. Errors can subtly shape how providers understand a session, particularly in relational or systemic contexts where nuance matters. They also raise questions: What safeguards, like clinician review or vendor feedback loops, are needed? How might repeated exposure to AI framing influence our own thinking over time?
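A short calculation can show why a small per-note rate still calls for review of every note. The sketch below assumes, purely for illustration, that errors occur independently from note to note and that the roughly 1.5% hallucination figure reported for primary care would carry over unchanged; neither assumption is established for teletherapy. The function name and the caseload of 100 notes are hypothetical.

```python
def chance_of_at_least_one_error(per_note_rate: float, notes: int) -> float:
    """Probability that at least one of `notes` drafts contains an error,
    assuming each draft errs independently at `per_note_rate`."""
    if not 0.0 <= per_note_rate <= 1.0:
        raise ValueError("per_note_rate must be between 0 and 1")
    if notes < 0:
        raise ValueError("notes must be non-negative")
    # Complement of "every single note is error-free".
    return 1.0 - (1.0 - per_note_rate) ** notes


# Hypothetical caseload: 100 AI-drafted notes at a 1.5% per-note rate.
print(f"{chance_of_at_least_one_error(0.015, 100):.2f}")  # prints 0.78
```

Under these assumptions, a clinician reviewing 100 drafts would have about a 78% chance of encountering at least one hallucination, which is one way to read the finding that human oversight remained essential.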

What patients see and feel

Patients do not always see AI directly, but they notice its effects when it changes how providers interact with them. In the Kaiser rollout, nearly half of patients said their provider spent less time looking at the computer during visits, and many noticed that providers seemed more engaged in direct conversation (AMA, 2025; Tierney et al., 2025). Two-thirds of responding patients reported feeling comfortable with the use of the technology, and more than half felt it had a positive impact on the quality of their visit; a smaller share were neutral or somewhat uncomfortable with it. Neither survey reported any negative effect on visit experience overall.

This feedback suggests that when documentation burdens are reduced, patients can experience visits as more focused and relational. At the same time, these data do not capture how discussing AI directly with patients may influence trust or comfort in the therapeutic relationship. That invites reflection in clinical practice. How does introducing AI into a session affect rapport and the sense of safety for patients? Could offering clear opt-in or opt-out choices empower patients to feel more in control of their information? Or might overt discussion of AI tools create unintended distance at the very moment providers aim to deepen connection?

The session before the session

Looking ahead, we might ask: How could deeper integration change the landscape? Some systems already suggest treatment directions or flag deviations from care plans. Could future versions generate pre-session alerts that shape the session before it even begins? Would such prompts support or constrain our clinical judgment? And if AI begins to “learn” from our corrections, whose model ultimately guides the narrative: the provider’s orientation, expertise, and humanity, or the platform’s default settings?

Consent, control, and the record

These possibilities bring us to the heart of the matter: consent, control, and the integrity of documentation. The latest AAMFT Code of Ethics (2026) emphasizes informed consent and confidentiality while calling on providers to use technology responsibly. Standard 6.3 requires providers to secure consent before offering care through technology and to give patients clear information about potential risks, and Standard 1.11 addresses consent for recording or third-party observation. Even though the code does not explicitly mention AI, these standards apply to emerging tools that process data or generate documentation.

When AI participates in documentation, control over information can become unclear. Patients may not realize their words are being processed by an algorithm or that they could opt out, and providers may not fully understand how the data is stored, how vendor systems are trained, or how outputs could be used beyond the immediate record. These factors can affect the quality of care and the trust patients place in providers.

Such considerations highlight ongoing questions for practice. How can providers integrate AI tools while maintaining patient consent and confidentiality? What guidance should professional organizations offer for ethical AI use? How can providers retain oversight and ensure accountability when sensitive patient information is processed by automated systems?

Disclosure still matters

Confidentiality and informed consent remain foundational. AI does not absolve us of responsibility to protect sensitive information or ensure documentation reflects the therapeutic reality. It simply adds a layer requiring greater intentionality. Many expert therapists (including myself) now recommend explicit, written disclosure during intake or via an addendum. That disclosure should explain what the AI does (for example, that it drafts notes as a scribe, not as a diagnostician), that all outputs are reviewed and edited by the human provider, that consent is voluntary and revocable, and how data is handled (compliant with HIPAA and HITECH, no use for public model training, audio deleted after processing). Verbal discussion helps too, in plain language that builds trust rather than legal jargon.

The chart still decides

Ultimately, patient charts remain the authoritative record of our collaborative work. AI-generated summaries are auxiliary tools, helpful in some ways, limited in others. Are they replacing the clinical judgment, relational attunement, and systemic curiosity that make family therapy effective?

Questions to pause on

So, as marriage and family therapists, we might pause and reflect:

- How does the presence of AI summaries influence the way I read my own notes or conceptualize cases over time?

- In what ways might this technology amplify or obscure the relational and contextual elements that are central to our model?

- How can I ensure patients understand and consent to AI’s role without eroding the trust that underpins our alliance?

- What boundaries or safeguards would help me maintain control over the narrative of care?

- If convenience saves time, what do I do with that reclaimed energy: deepen presence in sessions, or simply manage more volume?

These are not questions with easy answers. They are invitations to examine how emerging tools align with our values and to shape their use in ways that honor the complexity of human relationships.

Ezra N. S. Lockhart, PhD, LMFT, is an AAMFT professional member holding the Clinical Fellow and Approved Supervisor designations, and associate professor of Marriage and Family Therapy at National University. He is ethics chair for LAMFT and writes at the nexus of clinical practice, technology, and ethics.

American Association for Marriage and Family Therapy [AAMFT]. (2026). AAMFT Code of Ethics (effective January 1, 2026). https://www.aamft.org/AAMFT/Legal_Ethics/Code_of_Ethics.aspx

American Medical Association [AMA]. (2025, June 12). AI scribes save 15,000 hours—and restore the human side of medicine. AMA News Wire. https://www.ama-assn.org/practice-management/digital-health/ai-scribes-save-15000-hours-and-restore-human-side-medicine

Asgari, E., Montaña-Brown, N., Dubois, M., Pimenta, D., & others. (2025). A framework to assess clinical safety and hallucination rates of LLMs for medical text summarisation. npj Digital Medicine, 8, 274. https://doi.org/10.1038/s41746-025-01670-7

Bateson, G. (1972). Steps to an ecology of mind: Collected essays in anthropology, psychiatry, evolution, and epistemology. Chandler Publishing.

Lockhart, E. N. S. (2025a). Jay Haley’s model of strategic family therapy: An epistemological inquiry. Philosophy, Psychiatry & Psychology, 32(4), 407–434. https://doi.org/10.1353/ppp.2025.a978088

Lockhart, E. N. S. (2025b). Mentorship and clinical supervision through Haley’s strategic model: A composite case study in legal literacy. Journal of Systemic Therapies, 43(3), 1–19. https://doi.org/10.1521/jsyt.2024.43.3.01

Lukac, P. J., Turner, W., Vangala, S., Chin, A. T., Khalili, J., Shih, Y. C. T., … & Mafi, J. N. (2025). Ambient AI scribes in clinical practice: a randomized trial. The New England Journal of Medicine AI, 2(12), AIoa2501000. https://doi.org/10.1056/AIoa2501000

Martin, J. L., & Wright, K. E. (2023). Bias in automatic speech recognition: The case of African American language. Applied Linguistics, 44(4), 613–630. https://doi.org/10.1093/applin/amac066

Mengesha, Z., Heldreth, C., Lahav, M., Sublewski, J., & Tuennerman, E. (2021). “I don’t think these devices are very culturally sensitive.”—Impact of automated speech recognition errors on African Americans. Frontiers in Artificial Intelligence, 4, 725911. https://doi.org/10.3389/frai.2021.725911

Mess, S. A., Mackey, A. J., & Yarowsky, D. E. (2025). Artificial intelligence scribe and large language model technology in healthcare documentation: Advantages, limitations, and recommendations. Plastic and Reconstructive Surgery Global Open, 13(1), e6450. https://doi.org/10.1097/GOX.0000000000006450

Tierney, A. A., Gayre, G., Hoberman, B., Mattern, B., Ballesca, M., Wilson Hannay, S. B., … & Lee, K. (2025). Ambient artificial intelligence scribes: Learnings after 1 year and over 2.5 million uses. The New England Journal of Medicine Catalyst Innovations in Care Delivery, 6(5). https://doi.org/10.1056/CAT.25.0040

Topaz, M., Peltonen, L. M., & Zhang, Z. (2025). Beyond human ears: Navigating the uncharted risks of AI scribes in clinical practice. npj Digital Medicine, 8(1), 569. https://doi.org/10.1038/s41746-025-01895-6
